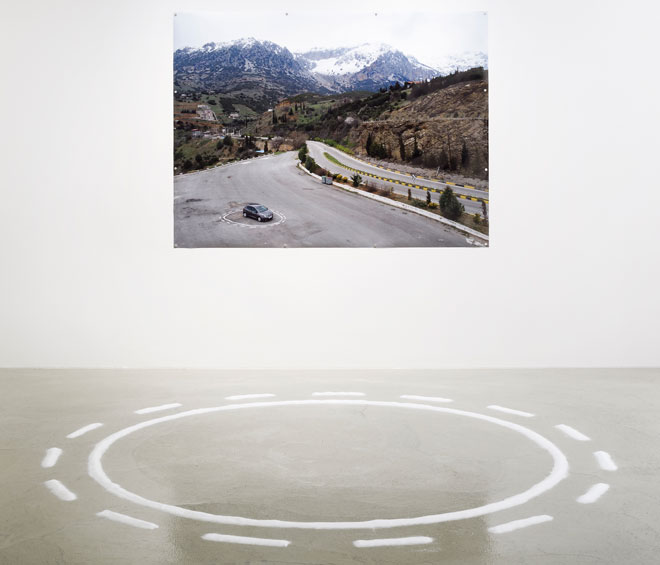

James Bridle. Failing to Distinguish Between a Tractor Trailer and the Bright White Sky, installation view, Nome Gallery, Berlin, 2017.

by KRISTIAN VISTRUP MADSEN

In 2011, British artist and writer James Bridle (b1980) began developing the concept of the “new aesthetic”. It has caused some confusion since it is not about aesthetics in the art historical sense, but literally what things look like; what the aesthetic surfaces of seemingly opaque technologies reveal about the underlying systems that produce them. Working across writing, photography, installation and software development, Bridle produces interactional and interventional situations that explore the employment of technology in exercising and upholding power structures.

These efforts often begin with making these power structures visible. From 2012 onwards, he made a series of works that outlined the shapes of drones, satellites and aeroplanes in streets and public spaces, making the ominous presence of flying devices evident on the ground. Then, in 2015, he tackled immigration and detention in the UK in his project Seamless Transitions. It is illegal in Britain to photograph the closed courts and detention centres that deal with immigrants, and the specially designated airport lounges from which people are deported, so Seamless Transitions digitally rendered the physical infrastructures of these places.

In 2016, following the EU referendum, the Serpentine Gallery commissioned Cloud Index, a system that uses machine learning to predict voting results based on the weather. Machine learning is a branch of artificial intelligence in which a system learns patterns from data rather than following explicitly written rules, often using neural networks loosely modelled on the structure of the brain. This is the exhilarating frontier of technological agency: the idea that computers can make decisions that they were not explicitly programmed to make.

A fascination with machine learning is also the jumping-off point for Bridle’s current exhibition at the Nome Gallery in Berlin, Failing to Distinguish Between a Tractor Trailer and the Bright White Sky. The show’s title stems from an accident report into a crash involving a self-driving car. I met Bridle as he was painting a circle like a road-surface marking on to the floor of the Nome Gallery’s new space in Kreuzberg.

Kristian Madsen: In making the current show at Nome, your artistic process has been one of self-education; teaching yourself how to write your own autonomous car software. How did this come about?

James Bridle: There’s a lot of fear around technology and a lot of anti-intellectualism that says that developing it is for other people to do, and I think that it’s for everyone to do. For Cloud Index, I worked with other programmers to make the technology, which is something I always find a little bit frustrating because, at the end of it, I don’t fully know what I’m doing. It’s like taking an idea and asking someone else to draw it, or to paint it. I’m not really satisfied unless I understand what’s being done, and how it works. So this project was about saying: “All right, now I need to fully learn how to do this and understand it myself.” Now, I understand it: I understand what you can do with it, which, in this case, is to do other things. My self-driving car was trained not to stick nicely to one set route, but to get itself lost. So it tries to take every turn that comes its way. When you build yourself, you can build in other possibilities.

KM: The exhibition opens with Untitled (Autonomous Trap 001), a photograph in which a car stands inside a circle of road surface marking. You write that you are interested in “how to appropriate contemporary technologies for divergent purposes, and, when necessary, how to resist them”. Can you flesh out the relationship between this statement and the image?

JB: If you can understand a certain amount about a machine’s view of the world, you can devise things that it relates to, in this case a trap. As much as you can do to build your agency through these technological structures, at some point they might just need to be stopped. And to keep open the possibility of stopping them, in an age of technological determinism, is actually quite a radical act. That’s what the trap does. To program, and to reprogram, and functionally to understand systems, is a radical act. In that sense, this image is about resistance.

KM: What do you mean by the term “technological determinism”?

JB: In the case of self-driving cars, the technological determinism line is: “We have the technology to do it, therefore we are going to do it.” And also, following on from that, the social effects that it will cause, like massive job losses, are just what’s going to happen, and there’s nothing we can do about it. The growth of the technology itself just presupposes and creates this future. I don’t believe in technological determinism; there’s a huge amount of things we can use this technology for, or not use it for, that are up to us.

KM: In many ways, your work is about interfering with technology as a way of getting to know it.

JB: This aspect of the work is about meeting technology halfway. Machine-learning, increasingly, is in everything: it’s in your banking system – it decides whether you get a mortgage; it’s in Amazon – it decides what you’re going to end up buying in quite important ways. It makes decisions, but the specific reasons for those decisions are almost impossible to know. So how do we find a kind of common ground where we can understand each other? Where the trap is a form of resistance that is kind of offensive, I’m interested in finding that middle point as well.

KM: Can the autonomous car then be seen as a type of post-human agent, and our interaction with it as one with another thing of agency?

JB: I’m probably less interested in the immediately post-human than in a relationship that breaks down the distinction between human and technological agency – that it’s not one or the other. There is a human behind the technology who has embedded existing politics and biases into it. It is not entirely machine-bred, but once we put these things into the world they can do strange things that are unexpected; they have a certain degree of their own agency. Several of the works, such as the Activation series, attempt to find a middle ground. Find a way for both human and machine to agree on an image of the world, and see the world, if not in the same way, then at least in a way that allows for some kind of negotiation.

KM: The Activation series is a work you produced for this show, using your own software, in which we see the stage-by-stage transformation of an image into data.

JB: You see an input image – a classical freedom image of an open road – as it slowly, to the human eye, degrades into seeming unreadable; pure ones and zeros. But that’s the point at which it’s actually readable to the machine, and not just readable but sensible: that’s the point at which the machine understands what it’s looking at. So it’s not just a passage of data from one side to the other, but a negotiation of sense-making of the world. I think there is a huge demand to humanise the technological view of the world, but also potential for humans to learn non-aggressive, less violent lessons from the technological as well.

KM: So when your autonomous car software allows you to get lost, is that a type of non-aggressive, more playful interaction we might have with technology?

JB: Absolutely. It’s also a fairly standard both artistic and mathematical technique for problem-solving, for coming to know the world. Going for a random walk, whether that means literally on the moors, or the mathematical function of a random walk, which is used in mathematical problem-solving by generating random numbers, is a tried and tested technique for scoping out the space you’re in. And if we just sit in the driverless car while it takes us where it wants us to go, then we’re obviously missing out on all these possibilities.

KM: In his essay accompanying the exhibition, Will Wiles writes of a time “when the automobile was capable of actually delivering on its promise of liberation”. There is some disappointment here, it seems, as to the promise of technology and modernity.

JB: On the one hand, there’s deep disappointment with this idea that the internet in particular would supply people with so much knowledge that we would somehow be magically better. Turns out, quite obviously, that’s not the case. The world is on fire, and that fire is the direct result of a confusion produced by this overwhelming amount of information. The world has always been complex, but it hasn’t been visibly this complex before. There’s no shutting that out now. For me, though, that still remains a hopeful point, because that is the way the world is: the world is complex, difficult and contradictory; technology is just showing us that. So we need to rethink this ontology of information. Technology forces us to deeply reconsider how we understand the world, and that, I think, is its most powerful value.

KM: This relates to what you have called a “need for new networked mythologies”. What is it that we can learn from mythology?

JB: One of the things that I have thought about a lot is the necessity of regaining a context and a culture and a literacy around doubt and uncertainty. Technological thinking drives out uncertainty. It says that it’s possible to gather enough data so that you can know everything and then make perfect decisions. That’s just a straight-up fallacy. It’s not working, and our belief in it is actually making things worse. And so to look for ways of talking about doubt and uncertainty can bring us outside an enlightenment position and take us back to other forms of storytelling, other ways of explaining the world. This is what mythology has been doing for thousands of years. When I say networked mythologies, I mean the ability to connect mythologies in new ways: to poke them together, to synthesise, and also to change them up.

KM: Gradient Ascent, which you show in the exhibition, is a video essay that follows your drive up Mount Parnassus in Greece while testing your software. Mount Parnassus is known in Greek mythology as the home of the muses. What is the relationship in this work between ancient myth and burgeoning technology?

JB: This work is also based on another allegory, René Daumal’s 1952 novel Mount Analogue. It’s philosophy considered as mountain-climbing, literally: about the nature of knowledge, and what it takes to acquire it. Mount Analogue is about a party of mountaineers who go to ascend this invisible mountain. Although invisible, by logic, this mountain, nevertheless, must exist. So they set out to find it, which they do and they go up it, and then Daumal dies, and doesn’t finish the book – which is kind of brilliant in itself. Because it is the home of the muses, in Greek mythology, to ascend Mount Parnassus is always to aspire to knowledge and to art itself. And therefore to drive the autonomous car up there is to insert a new figure in that mythology: technological agency. The film ends by asserting that, in fact, there are many mountains: beyond the mountains there are mountains.

KM: You have lived in Athens for a few years now, and the Greek landscape and mythology sets the stage for this exhibition. How have your experiences there influenced this body of work?

JB: One of the experiences of being in Greece is being a bit closer to practical social work, which is a lot more necessary, more visible on the ground, as well as a lot more accessible there. For me, this has freed the work up to become less didactic. I don’t have to put all my political statements so broadly and powerfully into the work – the work can actually try and do something else, and explore other avenues. Where previously this exhibition might have been a bit more shouty at Silicon Valley, it’s more open-ended now, and I think that’s good for it.

• James Bridle: Failing to Distinguish Between a Tractor Trailer and the Bright White Sky is showing at Nome Gallery, Berlin until 29 July 2017.